
Let’s say you want to buy a new car. Now, you aren’t a car expert, but you have a general idea of features you want in your slick new whip and you can find a market-determined price for the car you want online. Every day that your new car gets you from point A to point B without violently exploding you’ll know that you made a good decision. This is what cars are like.

Drugs are not like cars. Drugs are complicated little molecules pressed into tablets that a doctor tells you to take one, two, three times per day, maybe until you die. How much do drugs cost? They cost whatever your pharmacist says they cost. Drug prices are obscured both by a lack of patient drug expertise and by the complex negotiations between insurers, manufacturers, pharmacy benefit managers (PBMs) and pharmacies. Because patients cannot easily find a price for a drug, it is fair to ask if they are paying too much.

Fear not! The government regulates drugs, pharmacies, AND insurance companies. The government recognizes that patients do not know a lot about drugs and steps in to protect them. In fact, one of President Trump’s campaign promises was to lower the out-of-pocket costs for drugs. To that end, the Department of Health and Human Services (HHS) released American Patients First (APF), Trump’s blueprint to lower drug prices. It’s essentially a series of hypothetical plans that could maybe lower the cost of prescriptions in the United States.

The APF correctly points out that consumers asked to pay $50 vs. $10 are 4 times more likely to abandon their prescription at the pharmacy. We want patients to be able to afford their medications and to therefore be healthier. This is an important point to remember: reducing out-of-pocket costs is only useful if it increases health. Let’s see how the APF will make Americans healthier.

First we need to understand the justification for this beautiful document. Why are drug prices high? Well, one given reason is that the 1990s saw the release of several “blockbuster” drugs that dramatically increased pharmaceutical company revenues. However, many of these drugs lost patent protection in the mid-2000s. In order to maintain constant revenue streams, the APF posits, companies raised prices on other drugs.

The Affordable Care Act (ACA) put upward pressure on drug prices in a few ways.
First, it increased the number of critical-need healthcare facilities that receive mandatory discounts on drugs (340B entities). It also placed taxes on branded prescription drug sales. This was implemented to shift patients and organizations away from using brand-name (read: expensive) drugs when generics are available. To pay these taxes, however, drug costs had to go up. All of these justifications for high drug prices establish a pattern: if one person is paying less, then the costs shift somewhere else. Someone has to pay.

How does the APF plan propose to tackle high out-of-pocket costs? The strategies are presented as a four-point plan.

First, it proposes that the US increase competition in pharmaceutical markets. Classic free market stuff right here. One part is an FDA regulatory change which prevents a company from blocking entry of generic competitors into the market. Seems like a straightforward good idea. The other noteworthy idea here is to change how a certain class of expensive injectable drugs, biologics, is billed. This would prevent “a race to the bottom” in biologic pricing which would make the market less attractive for generic competition. Essentially this rule could help keep biologic prices high, to make the market profitable, so there is generic competition, to lower prices. It is difficult to predict if this would work or not.

The second objective is to improve government negotiation tools. This part is pretty fleshed out, with 9 different bullet points. However, 8 of the 9 points relate to Medicaid or Medicare, which primarily help old and/or poor people. Right now Medicare cannot take price into consideration when deciding whether to cover a drug. If the largest insurer in the country (the government) can start negotiating on prices, the market could shift dramatically. However, someone has to pay and this may shift prices to private plans.
Another goal in this section is to work with the Commerce Department to address the unfair disparity between drug prices in America and other countries. It is unclear how this would be achieved.

The third objective is to create incentives for lower list prices. Drugs have many different prices based on who is paying for them. Companies may be incentivized to raise list prices to increase reimbursement rates since they often only receive a portion of the list price for a drug. However, if the drug is not covered by a patient’s plan they could be on the hook for the inflated list price. One of the most widely criticized parts of the APF plan is to include list prices in direct-to-consumer advertising. Since most people do not pay the list price, is it even helpful to include? Probably not.

The final objective is to bring down out-of-pocket costs. I thought this was the purpose of the whole document so I was surprised that it is also one of the sub-sections. Both of the proposals here target Medicare Part D, so they may have limited benefits to non-Medicare patients. One proposal is to block “gag clauses” that prevent pharmacies from telling patients when they could save money by not using insurance. While this will indeed lower out-of-pocket costs for certain prescriptions, the point of insurance is to spread out the costs. The inevitable side effect will be price increases in other prescriptions.

The long final portion of the document is a topic-by-topic list of questions that need to be addressed. Who knew that healthcare was so complicated? There are some good ideas in here that need to be explored, like indication-based pricing or outcomes-based contracts. Austin Frakt has a good piece on these here. My favorite question in the section is: “How and by whom should value be determined??” Yes, the question in the APF includes the double question marks. This question really gets to the philosophical crux of the healthcare problem. It should be pretty simple to solve.
Here are some other quotes: “Should PBMs be obligated to act solely in the interest of the entity for whom they are managing pharmaceutical benefits?”

As of this writing, none of these policies have been implemented, but the President could instruct the FDA to begin them theoretically whenever. There are still many implications to these policies that are unknown. Each one likely has unintended consequences, as all policies do. The two critical questions we need to ask of our policy makers going forward are:

So uh good luck to us.


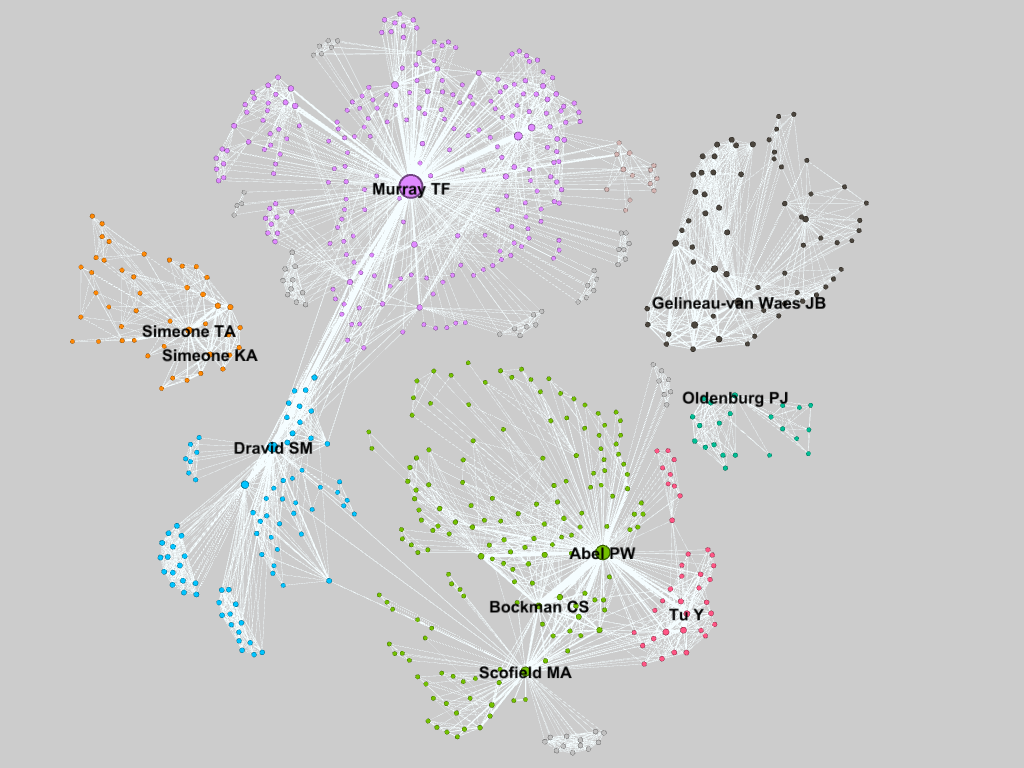

The Department of Pharmacology at Creighton University School of Medicine is small, but mighty. There are only 10 professors or principal investigators (PIs) in the department, but this small size has its advantages. Or at least that is what we tell ourselves. A recent paper in Nature argued that bigger is not always better when it comes to labs and we are putting that to the test. Ideally with a smaller faculty, there would be more collaboration. Everyone knows what everyone else is doing, more or less, so they can more efficiently leverage the various expertise found throughout the department.

To measure how interconnected the pharmacology department was, I created a network analysis visualization based on who published with whom. Using NCBI’s FLink tool I downloaded a list of the publications in the PubMed database for each PI in the pharmacology department at CU. A quick script in R formatted the authors and created a two-column “edge list” for each author, basically a list of every connection. This was imported into the free, open-source network analysis program Gephi, which crunched the numbers and produced a stunning map of the connections in the pharmacology department.

Gephi automatically determines similar clusters (seen as different colors), which are unsurprisingly centered on the various PIs in the department since those are the publications I was looking at. Dr. Murray, the department chair, has the most connections, also known as the highest degree, at 292, followed by Dr. Abel. Drs. Dravid and Scofield are ranked 2nd and 3rd respectively for betweenness centrality, after Dr. Murray. They are the gatekeepers that connect Drs. Abel, Bockman, and Tu to Dr. Murray. Each point’s size is proportional to its eigenvector centrality, similar to Google’s PageRank metric of importance.
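For readers who want to try the edge-list step themselves, here is a minimal sketch in Python. It is not the R script described above: the author names are hypothetical placeholders, and the third "Weight" column (the number of shared papers) is an addition to the two-column list. The `Source`, `Target`, and `Weight` headers are the column names I understand Gephi's spreadsheet import to recognize.

```python
from collections import Counter
from itertools import combinations

# Hypothetical author lists, one per paper (placeholders, not real data).
papers = [
    ["Author A", "Author B", "Author C"],
    ["Author A", "Author B"],
    ["Author C", "Author D"],
]


def build_edge_list(papers):
    """Count how many papers each unordered pair of coauthors shares."""
    edges = Counter()
    for authors in papers:
        # Sort and de-duplicate so (A, B) and (B, A) count as the same edge.
        for pair in combinations(sorted(set(authors)), 2):
            edges[pair] += 1
    return edges


def degree(edges):
    """Number of distinct coauthors (connections) for each author."""
    neighbors = {}
    for a, b in edges:
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)
    return {author: len(others) for author, others in neighbors.items()}


edges = build_edge_list(papers)
degrees = degree(edges)

# Print the edge list as CSV text that could be saved and imported.
print("Source,Target,Weight")
for (source, target), weight in sorted(edges.items()):
    print(f"{source},{target},{weight}")
```

Counting shared papers per pair keeps the number of edges fixed even if the same paper is listed twice in the input, but it does inflate the weight, so duplicates are worth removing before this step.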

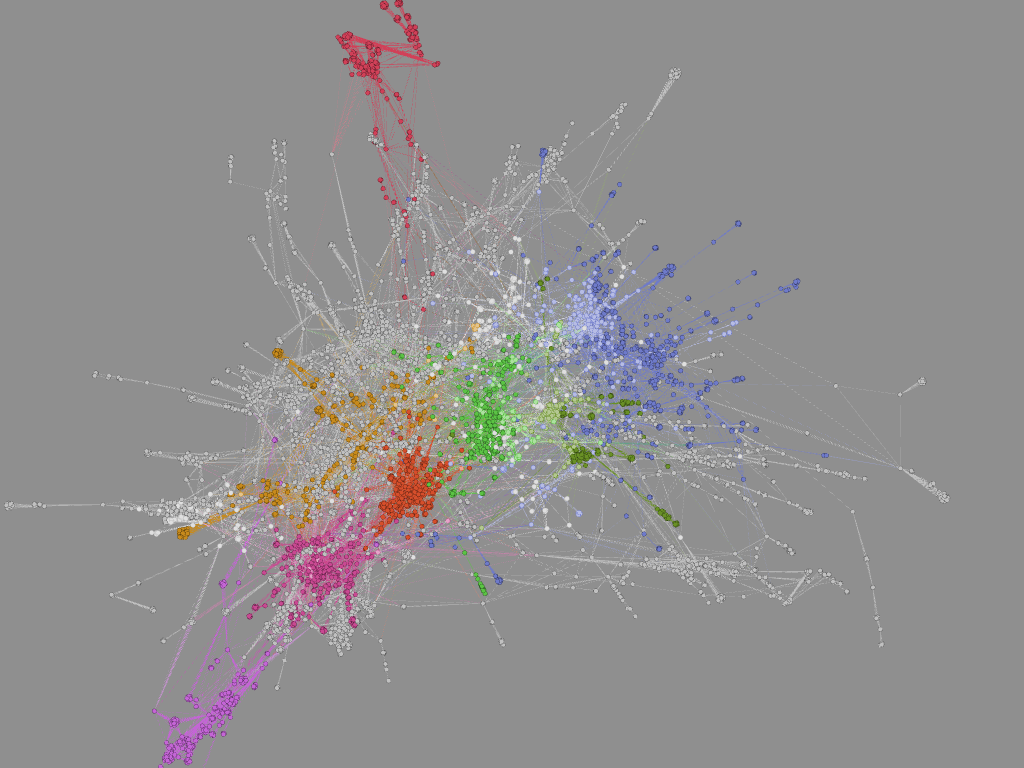

I was a bit surprised at how dispersed the department was. 60% of the PIs could be connected, and many have strong relationships. However, the rest are floating on their own islands. Dr. Oldenburg is relatively new so this is not surprising. The Simeones (who are married) are closely connected. Also unsurprising.

This was a quick and dirty analysis and a few of the finer points slipped through the cracks. Some of the names are common in PubMed (especially Tu), so I did my best to filter what was there and only look at publications affiliated with Creighton. Unfortunately this filters out publications from other institutions by the same author. Also, not everyone is attributed the same way on every manuscript. This is especially true for Drs. KA Simeone and Gelineau-Van Waes, who have published under different last names, but also because sometimes a middle name is given and sometimes it is omitted. I tried my best to standardize the spellings for each PI, but with over 700 nodes I could not double check every author to ensure there were not duplicates elsewhere. If more than one PI shows up on a paper, that paper may show up under both searches. This should not increase the number of edges, but would affect the “strength” of those connections.

The connections are about what I had imagined. The brain people are on one side, everyone else is on the other. Expanding the search to include the papers from coauthors outside of the pharmacology department might discover more interesting connections.

Just for fun I went ahead and pulled the data for every paper on PubMed with a Creighton affiliation. I could not even find my department on the visualization without searching for it. It is massive. The breadth of Creighton’s interconnectedness forces me to marvel at how vast the community of scientists must truly be. So many people working to improve the body of knowledge of the human race. We are really just small bacteria in a very large petri dish.
This post was originally hosted on the American Society for Pharmacology and Experimental Therapeutics Blog - PharmTalk. The original post can be found here.

Computer Skills for Scientists

The dawn of the computer age is over. Computers are no longer just hobbyist toys or specialized equipment; they have become an integral part of the scientific process. In fact you are almost certainly reading this on a computer. As science’s reliance on computing grows, so too will its need for able-minded computer users. Graduate school or post-graduate training is not only a time to develop your bench top skills, but also a time to focus on honing the “soft skills” of independent critical thinking, teamwork, networking, and scientific writing. Allow me to propose that you should also spend some of your (limited) time expanding your computing prowess.

Streamline Workflow and Save Time

Many commonly used programs, like Excel or ImageJ, allow you to write macros that will repeat a task over and over. Macros are sets of instructions that tell a program to repeat a specific sequence of tasks. Need to perform the same analysis on hundreds of images? A macro might work. Do you need to import, manipulate, and format a series of data sets in Excel? Try a macro. Some require a bit of coding knowledge, while others are able to record a sequence of clicks. You could potentially save yourself hundreds of hours by automating some of your more monotonous work. Take a day to look through your favorite program’s documentation or help files to learn what options are available to you. When in doubt, Google it. Chances are someone has already done something similar.

Improve Clarity of Scientific Reporting

Mistakes are inevitable, but they are a huge problem for data reproducibility. Automated computing tasks help to remove unintended biases in data analysis by essentially “blinding” the researcher, leading to increased reproducibility.
Journals are pushing for researchers to publish their raw data and program code. Peer review only works, though, if you, as a trained scientist, can interpret that computational information. Systemic statistics illiteracy is also a common problem that is closely tied to computational proficiency. The more you practice and explore a particular program, the more positive you can be that you are using the appropriate statistical tests. If you are familiar with GraphPad, check out their thorough documentation, which can answer most of your stats questions.

Open New Doors to Different Career Options

Bench top skills make you a good technician. Pick your favorite bench top technique. Are you really good at it? Then chances are high that so is everyone else in your field. Your competition likely has a similar publication record. They probably even took the same basic classes as you. How are you going to stand out in the academic field? Computer skills are, as they say, another tool in your toolbox that may help you stand out from the crowd. Universities are looking for faculty and post-docs that will add something to their team. That something could be working knowledge of a unique (and hopefully useful) computer program.

While almost any skill may give you a competitive edge when applying for jobs in general, certain careers are looking for scientists with specific skills. Clinical trials and high throughput screening create monstrous mounds of information. While the clinicians and technicians are running the experiments, someone has to translate the results into information that the decision makers can use. Data management tools like SQL, R, SPSS, or SAS are great ways to practice making sense out of huge amounts of information, are broadly applicable, and are sought-after skills in industry. “Big data” is an emerging field that may provide a potentially satisfying career path for PhD level scientists.
If you are interested in lab management as a career after graduate school, then experience with software like Labguru and Quartzy is a good place to start. Because this software can link specific reagents to each protocol, it can add reproducibility to your current lab’s data too. Software like this allows for better sharing of data between lab members and/or collaborators. Finally, “open science” tools like the Open Science Data Cloud allow researchers to access public datasets as well as make their own data available to collaborators across the planet.

Data visualization is a trendy new field that revolves around transforming raw numbers into thought-provoking graphics. Journalism organizations, both traditional and web-based ones, need people who are familiar with turning complex scientific ideas or large data sets into attractive and informative images. Professional biostatisticians and bioinformaticists also should be able to communicate their complex results to diverse audiences. Plotly has a Python library all about visualizations that might be a good place to start.

Not every job outside of academia will require a PhD, and each discipline has its own set of heavily used programs, so it would be unreasonable to try to learn them all. However, the simple act of independently learning a new skill demonstrates that you are driven to learn and can readily adapt to whatever technology is presented to you.

How to Get Started

One option is to ask your PI if they or your research group has a website. If they do not, help make one to showcase your lab’s current research and make it accessible to potential new members browsing the web. You can also make a website for yourself. This can serve as both an online resume and a tangible display of your HTML/CSS abilities. A completed website gives you a concrete “finish line” to your independent study. Plus, with websites like Squarespace, creating a new website is easier than ever.

You could enroll in a computing class at your university or seek out online courses. Codecademy has free lessons on programming basics and website design. Another potential avenue for learning is Software Carpentry. Software Carpentry is a global network of courses and instructors that seek to teach basic computational workflow skills to scientists. Classes like these can help you to start thinking like a programmer and open your mind to potential uses for code in your own research.

These are not your only options. You could make a video of one of your laboratory methodologies for YouTube. Learn a new program to calculate ANOVAs. Start a podcast with members of your lab. Ask someone in another lab if they can show you what software they use. It doesn’t have to be a huge time commitment, but you have to start somewhere. You can get a free week-long trial at Lynda.com, where they have hundreds of videos on cutting-edge software. Even practicing some of the advanced functions in Excel can go a long way towards being an effective, tech-savvy scientist.

Is this list comprehensive? No. If you learn a new skill are you guaranteed a job? No. But computers will only become a more integral part of quality science. With a toolbox full of computer-based skills you will be able to more nimbly assert yourself in the job market and the scientific community. The key is to try something new that you find interesting and maybe you can turn a hobby into a new career.

Additional Information

http://www.nature.com/nbt/journal/v31/n11/full/nbt.2740.html

http://journals.plos.org/plosbiology/article?id=10.1371/journal.pbio.1001745
